10 tips for keeping data in check

Storing data in Dataverse is expensive, especially the data stored in the actual database (DB data). Hence, for many customers with larger datasets, typically those with some B2C type of business, it is good practice to review the data you have in the system and figure out ways of keeping it from costing too much money.

Below are 10 tips you should consider to keep the data in check.

- Make a deletion list – and set up deletion jobs

- Flatten verbose fields

- Use virtual tables

- Use datalake for analytics

- Use datalake for archiving

- Compress complex structures

- Clean up non-production environments

- Revise datamodel

- Revise integrations

- Set lifecycle for instances

1 – Make a deletion list and set up deletion jobs

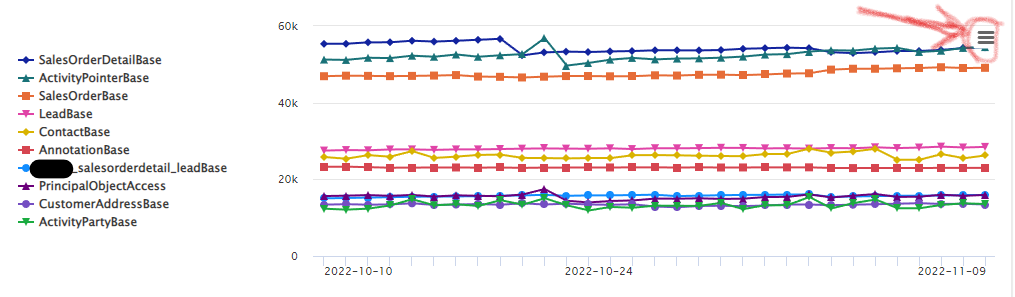

The first thing you need to do is go through the biggest storage contributors. You can find these in the Power Platform Admin Center under “Resources -> Capacity”. Open the view for each Dataverse instance by clicking the small chart symbol next to the instance.

You can export the full list by clicking the hamburger symbol in the right-hand corner of the graph. See picture below:

You can then start by trying to break down each table from the top. Based on the picture above, ask yourself questions like:

- Do we need to store and save all orders? By removing some orders, such as cancelled ones, we can cut down two of the three largest tables, as the order rows are removed at the same time.

- What activities are really causing activitypointer (the common table for all activities) to become so large? The email table is quite commonly a culprit here, as the body of an email usually takes up quite a few bytes, and with thousands or tens of thousands of emails they add up. Marketing integrations can also bloat activitypointer. I suggest investigating this further by exporting the data to an Azure datalake and analyzing it with Power BI. It is also quite common that not all emails need to be saved. For instance, if you have email-enabled queues like support@contoso.com, you will probably get some spam in them. Try searching for “unsubscribe” in the email body to find newsletter-like spam that you can remove.

- Are there any patterns of contacts and/or leads that can be removed? Working with B2C, you might find quite a lot of contacts and leads with incorrect data or similar. Ask yourself what value a lead with no phone number and no email has. Removing contacts will also remove customeraddress rows, as at least two customeraddress records are automatically created for each contact. The same goes for leads, but in that case the extra data is created in leadaddress.

The point of the list is to identify patterns of data that can be deleted. I typically have one row per rule, like so:

| Table | Method | Rule |
| --- | --- | --- |
| Contact | Bulk Delete | status code = inactive AND modifiedon older than 6m |
| Email | Bulk Delete | body contains “unsubscribe” AND modifiedon older than 1m |
| Email | Flatten w Power Automate | Outgoing AND subject = “Covid information” |
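A deletion list like this can also be kept as data and dry-run against an exported sample before any real bulk delete job is configured. The following is only a minimal Python sketch under my own assumptions: records are plain dicts, the field names (`status`, `body`, `modifiedon`) and the names `DeletionRule`, `RULES` and `candidates` are hypothetical, and "6m" and "1m" are approximated as 180 and 30 days. It does not call Dataverse in any way.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable

Record = dict  # one exported row as a plain dict; field names are illustrative


@dataclass
class DeletionRule:
    table: str
    method: str
    description: str
    matches: Callable[[Record, datetime], bool]


def older_than(record: Record, now: datetime, days: int) -> bool:
    """True if the record was last modified more than `days` days before `now`."""
    return now - record["modifiedon"] > timedelta(days=days)


# Hypothetical encoding of two rows of the deletion list (6m ~ 180 days, 1m ~ 30 days).
RULES = [
    DeletionRule(
        table="contact",
        method="Bulk Delete",
        description="inactive AND modifiedon older than 6 months",
        matches=lambda r, now: r["status"] == "inactive" and older_than(r, now, 180),
    ),
    DeletionRule(
        table="email",
        method="Bulk Delete",
        description='body contains "unsubscribe" AND modifiedon older than 1 month',
        matches=lambda r, now: "unsubscribe" in r["body"].lower() and older_than(r, now, 30),
    ),
]


def candidates(table: str, records: list[Record], now: datetime) -> list[Record]:
    """Return the records that match at least one rule defined for `table`."""
    rules = [rule for rule in RULES if rule.table == table]
    return [r for r in records if any(rule.matches(r, now) for rule in rules)]


# Dry run on a tiny, made-up sample.
now = datetime(2024, 1, 1)
contacts = [
    {"id": 1, "status": "inactive", "modifiedon": datetime(2023, 1, 1)},
    {"id": 2, "status": "active", "modifiedon": datetime(2023, 1, 1)},
    {"id": 3, "status": "inactive", "modifiedon": datetime(2023, 12, 1)},
]
contact_ids = [r["id"] for r in candidates("contact", contacts, now)]
print(contact_ids)
```

Running the rules on a sample first gives a rough idea of how many rows each rule would hit before the actual deletion job is set up.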

2 – Flatten verbose fields

Sometimes it can be a good idea to just clean out some “body” data. For example, if you have sent emails with the same Covid-related information to a lot of customers, the content of that email is the same for all of them and is known by everyone. Hence the body can be removed, which can typically be done with a Power Automate flow or SSIS/KingswaySoft, depending on the size of the data.
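The logic of such a flattening job is simple enough to sketch. The Python below is not a Power Automate flow or an SSIS package, just an illustration on in-memory dicts. The field names (`direction`, `subject`, `body`), the function name `flatten_bodies` and the placeholder text are all assumptions of mine, and the returned number is only the UTF-8 size of the removed text, not what Dataverse would actually report as freed storage.

```python
def flatten_bodies(emails, subject, placeholder="[body removed]"):
    """Blank out the body of outgoing emails with the given subject.

    Returns the number of UTF-8 bytes of body text removed (a rough estimate only).
    """
    saved = 0
    for email in emails:
        if email["direction"] == "outgoing" and email["subject"] == subject:
            before = len(email["body"].encode("utf-8"))
            after = len(placeholder.encode("utf-8"))
            saved += before - after
            email["body"] = placeholder
    return saved


# Made-up sample: only the first email matches the rule.
emails = [
    {"direction": "outgoing", "subject": "Covid information", "body": "x" * 5000},
    {"direction": "incoming", "subject": "Covid information", "body": "reply"},
    {"direction": "outgoing", "subject": "Invoice", "body": "y" * 100},
]
saved = flatten_bodies(emails, "Covid information")
print(f"Removed roughly {saved} bytes of body text")
```

Keeping a short placeholder rather than an empty body makes it obvious later that the text was removed on purpose.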

3 – Use virtual tables

Not all data needs to be stored in the expensive Dataverse database. The recommendation from Microsoft is typically that data you interact with should be in Dataverse and the rest can live somewhere else. As it is now possible to use SQL as a source for virtual tables, as well as Cosmos DB and many other sources, moving data out of Dataverse and accessing it through virtual tables can be a viable option.

Virtual tables should also be considered when designing integrations. It is not always critical that all data actually resides in Dataverse. Sometimes just being able to access the data through Dataverse is good enough, especially if the data source is fast, there isn’t that much data, and the data only needs to be accessed rarely. It is also easier to use virtual tables if the data is read-only and used for reference.

4 – Use datalake for analytics

Dataverse isn’t really a good source for analytics. All endpoints (even the T-SQL one) go through the application layer to the database, which makes large-scale data crunching less than optimal in Dataverse. This is also why Microsoft has developed methods for replicating Dataverse data to external stores. The recently discontinued Data Export Service (DES), which synchronized data to an Azure SQL database, and the newer Azure Synapse Link, which synchronizes data to a datalake, are clear examples of this.

Hence, a good architecture for analytics of Dataverse data is to do the analytics outside Dataverse, and the current best place for this is a datalake with Azure Synapse Analytics.

This also has a direct implication for the data stored in Dataverse: data that is only needed for analytics might not actually be needed in Dataverse anymore. This can be anything from old orders to old communication like emails and phone calls. Datalakes are also far more efficient for large-scale AI/ML analytics, which many organizations are looking for.

5 – Use datalake for archiving

If you haven’t already thought about it, a datalake can also be used as an archive for data that you might need but won’t use every day. Often a lot of data is stored for compliance reasons. When setting up archiving, whatever method you use, you probably want to keep the data in the archive after it has been deleted in Dataverse. Hence you might need to move the data from the initial storage container to the actual archive, making sure not to carry over any deletes.

Microsoft has announced that it will be putting its own hot/cold storage solution for Dataverse into public preview this spring. This sounds a lot like archiving functionality, and I am certainly looking forward to seeing what it will be, how it will work and how it will be licensed.
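The idea of moving data to the archive without carrying over deletes can be sketched roughly as below. This is Python on in-memory structures, and the change-event shape (`op`, `id`, `row`) is purely hypothetical. It is not the format Azure Synapse Link writes, so treat it as an illustration of the rule rather than something to run against a real container.

```python
def apply_to_archive(archive: dict, changes: list) -> dict:
    """Apply a batch of change events to the archive, ignoring deletes.

    Creates and updates overwrite the archived copy; delete events are skipped
    so the last known version of the row stays in the archive.
    """
    for change in changes:
        if change["op"] == "delete":
            continue  # keep the archived row even though it is gone from Dataverse
        archive[change["id"]] = change["row"]
    return archive


# Made-up change feed: order o-1 is created, updated and finally deleted.
archive = {}
changes = [
    {"op": "upsert", "id": "o-1", "row": {"name": "Order 1", "total": 100}},
    {"op": "upsert", "id": "o-2", "row": {"name": "Order 2", "total": 50}},
    {"op": "upsert", "id": "o-1", "row": {"name": "Order 1", "total": 120}},
    {"op": "delete", "id": "o-1", "row": None},
]
apply_to_archive(archive, changes)
print(sorted(archive))
```

After the batch, both orders are still in the archive, and `o-1` holds its last updated values from before the delete.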

6 – Compress complex structures

Some businesses need quite a complex data structure to handle their operations. It can be complex order structures with allocations of order rows to specific users, or product configurations with billing, logistics and provisioning settings. Once an order has been fulfilled in all aspects, it might not be necessary to keep all that complexity. It might be enough to keep the order header and the most critical information about what the order rows contained, perhaps even flattened into a JSON or text field for future reference. If it is possible to move from an order header plus 10 records in related tables to just an order header with a summarized text, then for thousands of orders that can save quite a lot of space.
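As a rough illustration of that flattening, here is a small Python sketch that keeps the order header and folds the order rows into one compact JSON string. The field names (`product`, `quantity`, `amount`, `rows_summary`) and the function name `summarize_order` are assumptions for the example, and which fields count as "most critical" is of course a business decision.

```python
import json


def summarize_order(header: dict, rows: list) -> dict:
    """Return a copy of the header with the order rows flattened into a JSON text field."""
    summary = [
        {"product": r["product"], "quantity": r["quantity"], "amount": r["amount"]}
        for r in rows
    ]
    compact = dict(header)  # leave the original header untouched
    compact["rows_summary"] = json.dumps(summary, separators=(",", ":"), sort_keys=True)
    return compact


# Made-up fulfilled order with per-row details that are no longer needed.
header = {"ordernumber": "SO-1001", "customer": "Contoso", "total": 250.0}
rows = [
    {"product": "Router", "quantity": 1, "amount": 200.0,
     "allocated_to": "user-17", "logistics": "DHL", "provisioning": "done"},
    {"product": "Cable", "quantity": 5, "amount": 50.0,
     "allocated_to": "user-22", "logistics": "DHL", "provisioning": "n/a"},
]
compact = summarize_order(header, rows)
print(compact["rows_summary"])
```

Once the summary has been written to the header, the related row records are the ones that would be candidates for deletion.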

7 – Clean up non-production environments

Data isn’t only stored in the production instances of dataverse. Many times production instances are copied and turned into test or staging instances. Integrations may be running towards development and test instances generating substantial amounts of data. Have a look at your non-production instances and check which data you actually need in them.

8 – Revise datamodel

Sometimes datamodels become unnecessarily complex. This is most common when someone with little experience of Dataverse and model-driven applications designs the solution. Another typical problem I have seen is repurposing tables like lead, account and sales order for other, simpler purposes. From a Dataverse storage perspective, the problem is that the additional storage overhead in these cases can be rather drastic, especially for businesses that have a lot of customers. Redesigning the datamodel to be sleeker can make it both more user friendly and less storage hungry.

9 – Revise integrations

Integrations into Dataverse can be a large source of data. For instance, many marketing applications generate quite a lot of data about customer behavior, which can then be integrated into Dataverse to be used there. As behavioral data can be very granular, down to the level of “customer x has been sent email y”, “customer x has read email y”, “customer x has clicked link z in email y”, it can take up substantial amounts of storage if integrated into Dataverse. The options available differ between marketing applications. For example, with Adobe Marketing this integration can be shut off and behavioral data can instead be synchronized to an Azure datalake, where it can be unified with other Dataverse data.

ERP integrations are also a common problem area. ERP systems often have a very granular level of data which might not be needed in Dataverse/Dynamics 365 CE. Sometimes just enabling deep linking directly to the ERP system can be a better method, combined with virtual tables. However, this is not always the case, and sometimes the best solution is simply to synchronize the data.

However, do recognize that there should be a reason for having the data in Dataverse. It should generally be actively used.

10 – Set lifecycle for instances

Having Dataverse instances with unclear usage, where nobody knows what they are for or whether they can be removed, is of course a source of quite a lot of data consumption. Clearly assigning owners, purposes etc. to all instances makes it easier to answer questions like “Do we really need ‘Internal test applications sprint 7’, or is it just an old remnant from a two-year-old development project?” This is part of the Center of Excellence starter kit and the processes that are important to set up in relation to it.
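Even a very simple inventory makes this kind of question answerable. The Python sketch below is not the Center of Excellence starter kit's data model. The `Instance` fields (`owner`, `purpose`, `review_by`) and the `needs_review` rule are just my assumptions about what a minimal lifecycle record could look like.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Instance:
    name: str
    owner: Optional[str]
    purpose: Optional[str]
    review_by: Optional[date]  # date by which the instance must be re-justified


def needs_review(instance: Instance, today: date) -> bool:
    """Flag instances with no owner, no stated purpose, or a missing or passed review date."""
    return (
        not instance.owner
        or not instance.purpose
        or instance.review_by is None
        or instance.review_by < today
    )


# Made-up inventory.
today = date(2024, 6, 1)
instances = [
    Instance("Production", "alice", "Live CRM", date(2025, 1, 1)),
    Instance("Internal test applications sprint 7", None, None, None),
    Instance("Staging", "bob", "Release testing", date(2024, 1, 1)),
]
flagged = [i.name for i in instances if needs_review(i, today)]
print(flagged)
```

Anything that ends up flagged is either assigned an owner and a new review date or becomes a candidate for removal.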

I hope some of these 10 tips have been useful. If you think so, the best way to thank me is to tweet or share your thoughts on this on social media. Also feel free to leave a comment below.

Perhaps there is something else that you think should be done, or something that I missed. If so, please share in the comments below.
