API per user limits – The good, the bad and the ugly

(Updated) Microsoft recently released some throttling measures that have caused a stir in the community. The latest one, the concurrency throttling, was not announced very openly, and some partners and customers were hit rather hard by it, as it affected their ability to manage large data loads in the system.

Now Microsoft has announced another API-based limitation, this one based on the users and the type of license they have. You can read about it here if you like. This article will discuss what this means and my personal view of the good, the bad and the ugly of it.

First of all we need to understand what it is. It is an API limit that will be set per user, based on the type of license that the user is allocated. The highest applies if you have a Dynamics 365 App user license, like Sales, Customer Service or similar, which will give you 20 000 requests per 24 hours. The lowest is a Power App – Per App license, which will give you 1 000 requests per 24 hours. Note that these are connected to the user and not summed/aggregated to the instance level (although I think that would be a good idea). Well, really, the lowest of them all are Application, Non-interactive or admin users that don’t use a license, as these will be allocated 0.
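As a rough illustration of how a per-user allocation behaves, here is a minimal sketch in Python. The figures are the ones quoted above; the license keys and the `remaining_requests` helper are names made up for this example and are not part of any Microsoft API.

```python
# Daily request allocations quoted in this article.
# The dictionary keys are shortened labels invented for this sketch,
# not official license or SKU names.
DAILY_REQUESTS = {
    "dynamics_365_app": 20_000,    # e.g. Sales, Customer Service
    "power_apps_per_app": 1_000,
    "unlicensed": 0,               # application, non-interactive and admin users
}


def remaining_requests(license_type: str, used_today: int) -> int:
    """Return how many requests one user has left in the 24-hour window."""
    return max(DAILY_REQUESTS[license_type] - used_today, 0)


print(remaining_requests("dynamics_365_app", 4_500))    # 15500
print(remaining_requests("power_apps_per_app", 1_200))  # 0
print(remaining_requests("unlicensed", 1))              # 0
```

The point of the sketch is that each user is evaluated against their own allocation, so unused requests on one user do nothing for another.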

I have not seen any UI for this yet, so I don’t know how this will look, but what the page is saying is that API-calls can be reallocated from normal users to application users/non-interactive users. (UPDATE – See update at the bottom regarding this, thank you observant readers!) Not sure if it will also be possible to reallocate API-calls between normal user and another normal user.

There will also be an additional SKU for buying 10 000 additional API calls per day that can be allocated to a user.

The Good

What is good about this then, you might ask? Well, I think it is fair. Large customers pay a lot of money for their instances and usually use them a lot, with a lot of integrations. It is only fair that they are allowed to use the API:s more than a small customer who has created some super duper application that blasts Dynamics with massive amounts of calls. The small customer can still do this, but they just have to pay a bit extra for those API-calls if they aren’t covering them with their users.

I also hope that this might enable Microsoft to relax the currently rather tight throttling on the API:s a bit.

According to the licensing documentation in general, existing customers will not be hit by this until October 2020, in other words more than a year from now. Hence this will probably only affect new customers for now.

The Bad

This implementation certainly has some bad parts. The most obvious is the overly strict coupling to users, which makes it awkward. I don’t know how this will be managed in the UI, but let’s say we have an instance with 500 users with a mix of Sales Enterprise, Customer Service Professional and Team Member licenses. We also have 10 application users that are used for Portals, Forms Pro and custom integrations to many other systems, each integration using a separate integration user to reduce the attack surface in the unlikely event of a hacker attack. So what we will need to do is first figure out how much API usage we have for all the normal users (for instance via PCF:s, Flows, Plugins, Workflows etc.) and for all the integration application users. Currently https://admin.powerplatform.microsoft.com does not give us this granularity. There are indications, but in this case one would need deep, granular data, preferably with trend analysis.

Another part of this that could be done better is the “buying additional API-calls”. Why not just adopt the method used in Azure? In other words, you pay as you go. With the current method, you have to know beforehand how much a particular user will use, and if you overshoot, the user will be shut down, causing unnecessary support costs for customers, partners and Microsoft.

I also wonder how this is going to be handled in practice. Are admins going to go into each of the 500 user records, reduce the API-calls allocated and move them to application users? If the admin moves all calls, which will effectively stop plugins, workflows, JavaScript with server calls etc., what will the error handling of that look like?

The Ugly

What is really the difference between something bad and something ugly? I would say that something bad is a design decision that we might dislike or that might be a disadvantage to customers; it requires some sort of conscious perspective. Ugly, on the other hand, covers the parts where, in this case, Microsoft has simply forgotten to think about something or neglected perspectives, which causes issues for partners or customers. Based on this, I would say that the following are the ugly aspects of this:

Timing

Again Microsoft are rolling out a change with a rather short timeframe. They probably feel that a month or two of notice by publishing the article above is notice enough, but they have to realize that many customers cannot act that fast. If you are a small customer with extensive use of Dynamics, for instance if you are using Dynamics 365 in a B2C aspect with a Marketing Automation integration and you are targeting millions of customers with sendouts and hits on your webpage being mirrored to your Dynamics all the time, this will cause some hefty API traffic. And your org might not be very big if you are totally e-commerce oriented.

Maybe only new customers, for now

Lastly, I really hope that it is true that the API limitation will not affect current customers. It is not very clear, and hence we are left in the dark again. If there is a problem with application users etc. not being able to log in, I hope Microsoft support will be ready for the storm that will hit them.

On the other hand, new customers might have tested the system and evaluated the costs, and are now faced with this. I am not sure that will be optimal either; there is a risk of losing a customer or two there.

Communication

This is a rather drastic change and may be viewed as a “breaking change” unless the one-year grace period mentioned in the general licensing documentation applies to it. Regardless, this should have been communicated very clearly months ahead to remove any kind of doubt among partners and customers, both via blogs and via emails to admins of organizations using application users/non-interactive users, as these should be easy to identify via telemetry. Currently no one knows exactly when this will hit them or their customers, or how they are to manage it.

This is generally very unclear. I shouldn’t have to write an article like this, speculating about what is or isn’t going to happen. If I have problems figuring this out, being an MVP, customers are probably very much in the dark, both existing and new.

Conclusion

In conclusion I think this is a good idea that got rushed. It should have been passed through a couple of more hoops before being launched to get the right feedback. The main things that I think Microsoft should change before rolling this out that, from my perspective, still give the same effect, are:

- Aggregate all API-Calls that are counted to a per instance level. It will make it easier to manage, stop the breaking change and make it easier to understand.

- Enable admins to add a per-use, after the fact, payment option, (like Azure) for any additional API-calls.
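To make the first suggestion concrete, here is a minimal sketch comparing per-user enforcement with a pooled, per-instance allowance. The user names and usage numbers are hypothetical, chosen only to illustrate the difference; nothing here reflects how Microsoft actually implements enforcement.

```python
def users_over_limit(limits: dict, usage: dict) -> list:
    """Per-user enforcement: list users who exceed their own allocation."""
    return [user for user in usage if usage[user] > limits[user]]


def pooled_overage(limits: dict, usage: dict) -> int:
    """Per-instance enforcement: requests above the summed allocation."""
    return max(sum(usage.values()) - sum(limits.values()), 0)


# Hypothetical instance: two licensed users and one application user.
limits = {"sales_user": 20_000, "service_user": 20_000, "integration_app_user": 0}
usage = {"sales_user": 800, "service_user": 1_200, "integration_app_user": 15_000}

print(users_over_limit(limits, usage))  # ['integration_app_user']
print(pooled_overage(limits, usage))    # 0
```

With the same total traffic, the integration user is over its limit when counted per user, while the instance as a whole stays well within the summed allocation.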

Whether this is going to be useful or not also depends heavily on whether we can reallocate a lot of the API-calls from users to the integration users. For instance, I have a B2C customer with 1M+ API calls per 24 hours, and if it is not possible to take the sum of hundreds of users and allocate those to the application users we are using, then this will be a very hurtful change.

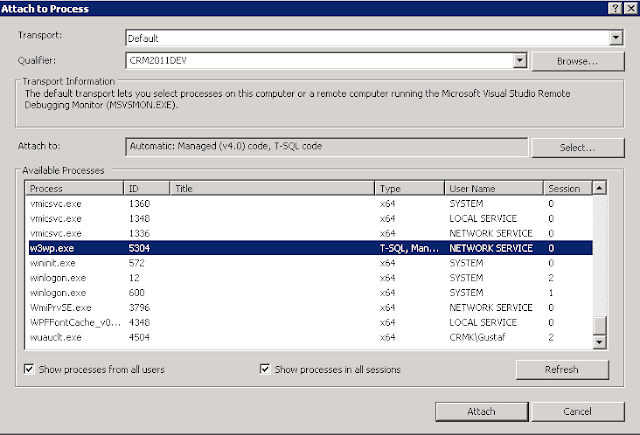

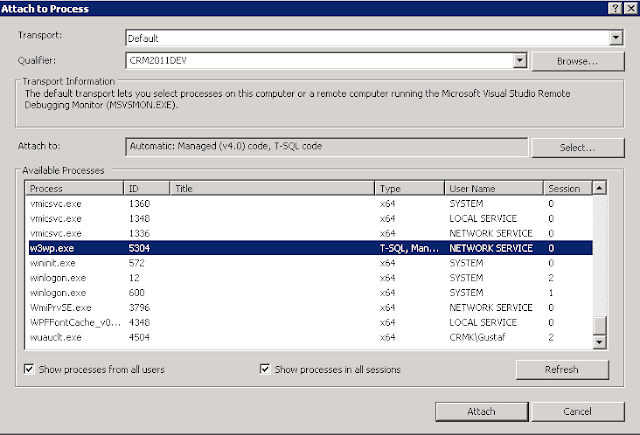

In the meantime, I do recommend that you keep a close eye on what is going on within this area, as it will most likely affect you if you are running any application accounts, which you probably are, like Dynamics Portal, Forms Pro, Voice of the Customer and many more. If you go into the list of users and change the view to “Application users” (or whatever it might be called in your language) you will see the list. I think Microsoft will make some changes or some announcements about this before October 1. Let’s see what.

Update 2019-09-04

There has been some chatter going around regarding this and do note the comments below which include interesting links and good thoughts. There are some additional points that need to be pointed out. Instead of changing the original article I will continue to add updates like these.

Normal UI usage will count

Initially I did not think that normal UI usage would count towards the API request calls. With “normal” in this case, as an old Dynamics 365/CRM geek, I of course mean a model-driven app, but the same also goes for canvas apps or actually any use of the CDS whatsoever. What this will mean when a user runs out of API requests will be interesting to see. How many requests are used when the application is used of course depends a lot on what you do. If you open the developer tools with F12 in Chrome, you can check the network traffic and see for yourself.

Batching will be your friend

Using batching will from now on not only be a general best practice but also save you money. If you use tools like Kingswaysoft, this is easy to configure, to make sure that you have large batches when, for instance, doing CUD calls. When writing code directly, you will need to understand how to do this yourself. Note that sometimes this will require entire rewrites of the code. I have seen programs off the shoulder of Orion that you wouldn’t believe, with tons of single queries instead of one single call, most often written by devs who have no or very little experience of writing code towards Dynamics 365/CDS.

Unclear if possible to move API-calls

As several people here and on Twitter have commented, it is probably incorrect to interpret this as meaning that API calls can be moved from normal users to application users and non-interactive users. This will cause major headaches for some customers, who will be hit with lots of additional costs. These costs are not very welcome, as the per GB cost recently increased 800%, hurting especially the larger customers with massive integrations and extensive use of the system. I do, for instance, have a customer that exceeds 1M requests per day, 365 days a year. This would require them to buy over 100 of the 10k API request addon SKU:s, despite the fact that their 500 users give them a total of over 5M requests per day, something they will not be using through the UI unless someone is drinking very large amounts of coffee. – NEW Update: This was an incorrect interpretation. You cannot reallocate API calls from normal users.

The price is here

The price for the 10k/24h SKU will be $50/month. This means that for a customer like mine, with major integrations causing around 1M API-calls per day, this would cost an additional $5 000 per month, or $60 000 per year. I sincerely hope they will relax the throttling to make it worth it. If/when they do, I will read my Machiavelli again.
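The arithmetic behind those figures can be sketched as follows. The 10 000 requests per addon and the $50/month price are the numbers quoted above; the `addon_cost` function and its `included_requests` parameter are assumptions made for this example, the latter covering the case where some requests are already included in licenses.

```python
ADDON_REQUESTS_PER_DAY = 10_000   # size of one addon SKU, as quoted above
ADDON_PRICE_PER_MONTH_USD = 50    # price of one addon SKU, as quoted above


def addon_cost(daily_requests: int, included_requests: int = 0) -> tuple:
    """Return (addons needed, monthly cost in USD, yearly cost in USD)."""
    shortfall = max(daily_requests - included_requests, 0)
    # Ceiling division: a partly used addon still has to be bought whole.
    addons = (shortfall + ADDON_REQUESTS_PER_DAY - 1) // ADDON_REQUESTS_PER_DAY
    monthly = addons * ADDON_PRICE_PER_MONTH_USD
    return addons, monthly, monthly * 12


print(addon_cost(1_000_000))  # (100, 5000, 60000)
```

Anything above an exact multiple of 10 000 rounds up to one more addon, so a customer at "1M+" requests per day ends up at 101 addons or more.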

Update 2019-09-05

First of all I will write a new blog article on this, when the dust settles and we know what is going on. Currently there are quite a lot of unknowns and I wouldn’t be surprised if Microsoft announced a thing or two soon. I have been told that the FAQ will be updated in a couple of days.

Batching – again

There was some discussion about whether batching would actually be useful in this case or not. I have now had it confirmed that a batched request will be counted as one (1) call. This applies both to batched Creates/Updates/Deletes and to queries returning multiple records (it would be very strange if such a query wasn’t counted as one call, but I had to ask).
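Given that a batch counts as one call, the effect on the request count is easy to estimate. The sketch below is plain Python and does not call any Dynamics 365/CDS API; the `chunk` and `requests_needed` helpers and the batch size of 500 are hypothetical choices for illustration, and the batch size your tool or endpoint allows may differ.

```python
def chunk(records: list, batch_size: int) -> list:
    """Split records into consecutive batches of at most batch_size items."""
    return [records[i:i + batch_size] for i in range(0, len(records), batch_size)]


def requests_needed(record_count: int, batch_size: int = 1) -> int:
    """Requests counted against the limit if each batch counts as one call."""
    return -(-record_count // batch_size)  # ceiling division


records = list(range(50_000))               # stand-in for 50 000 rows to update
print(requests_needed(len(records)))        # 50000 (one call per record)
print(requests_needed(len(records), 500))   # 100 (one call per batch of 500)
print(len(chunk(records, 500)))             # 100
```

Under this counting, an integration that updates records one by one uses up its allocation hundreds of times faster than one that sends the same work in batches.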

Data Export Service etc.

Data Export Service and other services that run under the system account will not count towards the API request limit. This is good news, as it makes it possible for many customers to use this method to offload reads from the API:s.

What is the competition up to

I checked to see how SFDC are handling this and as far as I can see they have a similar setup as can be read here:

and here

https://support.geckoboard.com/hc/en-us/articles/216804218-I-ve-hit-my-Salesforce-API-request-limit

I am no expert on their licensing model, but I think it is good to know that this isn’t just a PowerPlatform thing. However, there are some distinct differences:

- The API calls are not counted for normal browser/client usage. Only “real” API calls.

- They have real enforcement blocking an entire instance/org if they overshoot

- All API:s per user license are summed up to the org level

Microsoft Addon apps will include requests

If you buy Dynamics Portals, this will include some additional licenses. The same goes for Forms Pro. Hence there should be some default API request assignment to those application users that are installed. I do wonder if it would be financially beneficial to piggyback on those application users? There is also no current method for ISV:s to bundle API-requests into their product if they install an application user upon installation.

CSP / Distributor silence

We have still heard nothing about the 10k addon SKU from any distributor, EA or CSP. It will be interesting to see if it will reach the entire distribution chain by October 1, when customers (new customers) will start being notified that they are in violation.
