by gustaf | May 14, 2021

As I mentioned in my previous articles, I am trying to investigate the details of how entitlements and API Service Protections work and, in the case of entitlements, how they are planned to be rolled out. I had a very interesting call with some of the nice people in the product team, which shed some more light on the entitlement issue and on how they suggest the API should be used. The suggested method is to spread the API request load over the different users in the instance/tenant using impersonation. I will walk through what this means and what I think about it in the article below.

If you have not read my previous post on entitlements, I suggest you read it first. It describes what entitlements are compared to API Service Protection. I still see a lot of people mixing these up, which is not strange, but they are two different things and we need to keep track of which one we are talking about.

As mentioned in that article, the point of enacting the Entitlements, whenever that happens (the timing is still a bit unclear), is that the compute consumed by a small organization should be proportionate to that of a large organization. So, let us go back to the actual per-user licenses and have a look at an example.

Let us say we have an org with 5 000 Sales Enterprise users. That means that we get:

- 5 000 users who each have 20 000 API request entitlements.

- 100 000 API Requests for non-licensed users.

Compare this to an org with 5 Sales Enterprise users, which will have:

- 5 users who each have 20 000 API request entitlements.

- 100 000 API Requests for non-licensed users

Both of these are totally independent of how many instances the first or the second org has.

The first observation is of course that the 100k API requests for non-licensed users do not scale at all with the size of the organization or the number of users. How does this align with the goal that a large org should have more compute than a small one? The second observation is that 20 000 API requests, which the normal UI will actually also be consuming, is a very large number. You would have to be one busy salesperson to generate 20 000 API requests manually in 24 hours; so busy that I am tempted to say the limit is virtually impossible to break unless you have very heavy automations running under your account. This was also what the Microsoft rep I talked to mentioned: this large number is meant to be used on a per-user basis. Hence the natural question was: if we use impersonation in the API, will the Entitlements honor that? The answer was unequivocally yes.

Hence, this is the clear answer on how we need to create future integrations. We need to spread the load using impersonation over many of the users in the system.

Microsoft docs on how to do impersonation
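To make the idea concrete, here is a minimal sketch of what an impersonated Dataverse Web API call looks like at the header level. It only builds the headers and sends nothing. The function name and the example values are my own illustration; the `CallerObjectId` header carries the Azure AD object id of the user to act as, and the calling identity is assumed to hold the privilege to act on behalf of another user.

```python
def impersonation_headers(access_token: str, caller_object_id: str) -> dict[str, str]:
    """Build Web API request headers that run the call as another user.

    access_token     -- OAuth token of the integration identity (assumed to be
                        obtained elsewhere)
    caller_object_id -- Azure AD object id of the user to impersonate
    """
    return {
        "Authorization": f"Bearer {access_token}",
        "Accept": "application/json",
        "OData-MaxVersion": "4.0",
        "OData-Version": "4.0",
        # The request is evaluated with this user's privileges.
        "CallerObjectId": caller_object_id,
    }


# Hypothetical values, for illustration only.
headers = impersonation_headers("<token>", "00000000-0000-0000-0000-000000000001")
print(headers["CallerObjectId"])
```

The same headers can be passed to whatever HTTP client the integration already uses; only the `CallerObjectId` value changes from request to request.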

If we do this the right way, it would probably be possible for most organizations, over time, to build a fix for this.

However, it will not be easy as we need to have a tight control of the privileges of all the users. Let me give you an example from a customer I work with:

They are an online travel agency and have people working at the destinations with very restricted privileges. A lot of bookings (orders) are integrated from the booking systems, and these should hence be spread out over many users instead of the single application user being used today. There is no natural user to direct the bookings to, as it is a B2C business and no person at the travel agency “owns” these customers per se, so the load needs to be distributed in a more randomized fashion. So, let us say we have these users:

- John Smith – System Admin (Full access)

- John Doe – Power User (can create orders but not refunds)

- John Surf Dude – Destination Specialist (can view but not create orders, cannot even read refunds)

When rebuilding the integration, we can use John Smith and John Doe but not John Surf Dude. The only generic way of knowing this is to take the operation we want to perform and compare it to the privileges of each user, which gives a shortlist of users that can be used for the integration.

However, we do not want to use a user that is close to 20k API requests for that day, so we might need to query the current API Request entitlement usage per user, so that we can filter the current shortlist to an even shorter list before knowing which users to use for impersonation.
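The two filtering steps above can be sketched roughly as follows. This is only an illustration under my own assumptions: the privilege names, the `headroom` safety margin and the `requests_used_today` figure are hypothetical, and how the current usage per user would actually be retrieved is left open.

```python
import random
from dataclasses import dataclass, field

DAILY_ENTITLEMENT = 20_000  # per-user figure used in the example above


@dataclass
class User:
    name: str
    privileges: set[str] = field(default_factory=set)  # hypothetical privilege names
    requests_used_today: int = 0  # assumed to come from some usage report


def shortlist(users, required, headroom=1_000, limit=DAILY_ENTITLEMENT):
    """Keep users who hold every required privilege and still have headroom."""
    return [
        u for u in users
        if required <= u.privileges                    # subset check
        and u.requests_used_today + headroom <= limit  # not close to the limit
    ]


def pick_user(users, required, rng=random):
    """Pick a random user from the shortlist to impersonate."""
    candidates = shortlist(users, required)
    if not candidates:
        raise LookupError("no user can take this request right now")
    return rng.choice(candidates)


USERS = [
    User("John Smith", {"create_order", "create_refund", "read_order", "read_refund"}),
    User("John Doe", {"create_order", "read_order"}),
    User("John Surf Dude", {"read_order"}),
]

print([u.name for u in shortlist(USERS, {"create_order"})])
```

With the users from the example, only John Smith and John Doe end up on the shortlist for creating orders, and a user who is within the headroom of the daily limit drops off it.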

Analysis

- A way forward – I think this can be used, although there are some tricks to it. For my customer we might be able to move a significant number of API calls off the single application user this way, which will make a huge difference compared to not using this technique.

- Impersonation not always viable – as in the example above, when there is no obvious owner to link to, we need to figure out some other logic for how to spread the API entitlement load. And things start to become tricky.

- Impersonation is complex.

- More complex dependencies on the security model – as mentioned above, trying to execute an action as a user who does not have the correct privileges won’t work, so we need to know that first. And setting everyone as System Administrator just will not work.

- Logical user or just random users – we can try to map the users to some logical connection from the other system, or just randomize the load. A logical user is probably preferable but will probably not be a very common pattern.

- Integration is often system-to-system, not user-to-user – when looking at CRM-ERP integrations for instance, the user bases of the two systems seldom overlap except for a few users.

- Takes time to refactor code to handle impersonation – There are many organizations out there with numerous complex integrations. And changing integrations on this level will require significant work to be done and the question will be if there is time to complete this work before the entitlement feature goes to GA?

- Strange audit trail – if we use randomized users to update or create data in Dataverse, that will undoubtedly create very strange audit trails and strange values in the “created by” and “modified by” fields. This needs to be taken into consideration.

- Power App – per App users have very few requests – Not all licenses have 20k API requests per 24h. The Power App per App license has only 1000 API Request entitlements per 24h, and these can run out just by using the system heavily. So do consider the API Entitlements when looking at the licenses.

- Still not GA – Entitlements have still not gone GA. Hence the best time to let Microsoft know what you think is good or bad about this is now. But do be civil, there will be some feature like this, that will handle fairness management of compute consumption. Contact Microsoft through your local User Group, your local MVP or via the comment below or send me a message on LinkedIn and I will put you in contact with the right people. You can also submit an idea to the idea portal.

- There might be a point to binding all entitlements to users if, in the future, any overshooting would result not only in angry emails but in service degradation or shut-off for that user. Imagine creative citizen devs unknowingly creating some infinitely looping Flow or massively recursive logic which causes a lot of requests. This approach would then just cause a block for that user, not the entire tenant, significantly reducing the severity of the problem.

Suggestions

Personally, I think this method is just way too complex. I think simply pooling all the API entitlements on the tenant level would be fair, and then deducting all usage from this pool. I think that Microsoft could skip the 100 000 for the non-licensed users, for simplicity. Based on the examples above, that would make:

5 000 Sales Enterprise users

- 5 000 users who each have 20 000 API request entitlements.

Total API Entitlement for the tenant: 100 M / 24 h

5 Sales Enterprise users

- 5 users who each have 20 000 API request entitlements.

Total API Entitlement for the tenant: 100 K / 24 h

And all users, and all non-licensed users use from the same pool.
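The arithmetic behind the two totals is simple enough to write down. The sketch below only restates my suggested pooling model with the 20 000 figure from the example; the function name is my own and nothing here reflects how Microsoft actually calculates anything.

```python
PER_USER_ENTITLEMENT = 20_000  # API requests per licensed user per 24 h


def pooled_entitlement(licensed_users: int, per_user: int = PER_USER_ENTITLEMENT) -> int:
    """Tenant-wide pool if every per-user entitlement is simply added together."""
    return licensed_users * per_user


for users in (5_000, 5):
    print(f"{users} users -> {pooled_entitlement(users):,} requests / 24 h")
```

For 5 000 users this gives 100 000 000 (100 M) and for 5 users 100 000 (100 K), matching the totals above.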

As for the potential problem of creative users potentially blocking the entire tenant, I would suggest adding a “per user” API request limit, which can be changed by the admins, but by default is set at exactly the same as the entitlements. That would allow admins to reduce the limit to 10k for enterprise users, to ensure the server-to-server integrations were still enabled in a proper and entitled way.

I think this would align with Microsoft’s goals and make it easy to understand for customers and we do not have to rewrite tons of code and make strange workarounds. But maybe there is something I am missing. If so, and you see it, please leave a comment!

by gustaf | Mar 7, 2021

Some people might have heard about an industry best practice that you should never have custom columns (fields) on the systemuser table (entity) in Dataverse. Is this true, and if so, why? This article is based on my understanding of the inner workings of Dataverse and hence what you need to think about when designing your application, so that you do not unintentionally create an application that destroys your environment’s performance. In short, be careful about adding custom columns to systemuser and, if you do, only add columns that hold static data, i.e. data that doesn’t change often. Let me describe this in more detail.

First of all, I would like to give credit for a lot of this to my friend and former Business Application MVP Adam Vero, who described it in detail for me. I have also discussed it with other people and since had it confirmed, but I have not actually seen it documented as such, so it might not be fully official. I do not, however, see any problem with people understanding this, rather the opposite.

Dataverse is an application platform that has security built in as an integral part: security roles, system users, teams and business units form the core pieces of the security in the system. As the system will often need to query data from these four tables, it has a built-in “caching” functionality that, per environment, loads these four tables and precalculates them into an in-memory table for easy and fast access. This is then stored in memory for as long as the data in these four tables stays static, in other words as long as nothing is changed: no updates, no creates, no deletes.

What could then happen if you add a column to the systemuser table? Well, that depends. If it is a column that you set when the user is created and then never change, that isn’t a problem, as it wouldn’t affect the precalculated in-memory table. However, say the data in the column is constantly being changed. For instance, you add a column called “activities last 24h” and create a Flow that increments it by one every time an email, appointment etc. is created, and resets it every night for every user. Then every time this writes to any user, the precalculated in-memory table will be flushed and recalculated before it can be used again, causing a severe performance hit that can be very hard to troubleshoot.

How would you then create a solution for the “activities last 24h” column described above? Well, I would probably create a related entity called userstatistics with a relationship to systemuser. In this case it could even be smarter to have a 1:N relationship to this other entity, as you could then have many userstatistics rows per user and measure differences in activities day by day.
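The difference between the two designs can be illustrated with a toy model. To be clear, this is not how Dataverse is implemented; it is a deliberately simplified sketch of the behaviour as I have described it, where any write to one of the four security tables invalidates the precalculated table and the next access check has to rebuild it. The class and function names are my own.

```python
class ToyEnvironment:
    """Toy model: writes to security tables invalidate a precalculated cache."""

    SECURITY_TABLES = {"systemuser", "team", "businessunit", "role"}

    def __init__(self):
        self.cache_valid = False
        self.rebuilds = 0  # how many times the expensive recalculation ran

    def write(self, table: str) -> None:
        # Only writes to the four security tables flush the cache.
        if table in self.SECURITY_TABLES:
            self.cache_valid = False

    def check_access(self) -> None:
        # An access check needs the cache; rebuild it if it was flushed.
        if not self.cache_valid:
            self.rebuilds += 1
            self.cache_valid = True


def simulate(counter_table: str, activities: int = 100) -> int:
    """Each activity updates the counter, then some user touches the system."""
    env = ToyEnvironment()
    for _ in range(activities):
        env.write(counter_table)
        env.check_access()
    return env.rebuilds


print("counter on systemuser:    ", simulate("systemuser"), "rebuilds")
print("counter on userstatistics:", simulate("userstatistics"), "rebuilds")
```

In this toy model, keeping the counter on systemuser forces a rebuild for every single activity, while keeping it on the related userstatistics table only needs the initial build.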

But wouldn’t the NLBs (Network Load Balancers) make this irrelevant, as each environment is hosted together with many others? Well, due to NDA I cannot talk about the details of how the NLBs actually work for the online environments, but I can say this: no, it is still relevant. The NLB will make it so for performance reasons.

As for teams, it is only the owner teams that count in this equation, the access teams are only being used for sharing or other types of grouping and hence never part of this pre-calculation.

And the savvy person would then of course realize that the multiplied size of:

systemusers x owner teams x business units x security roles

makes up the size of this precalculated table. For large implementations, this can indicate where performance starts to make a difference, as every time a user does not have organization-level privilege, the system has to go through the entire table to check what is right. And then of course there is the POA, but that table is a story for another day and another article.

Just a final word. The platform is constantly shifting and even though this was true and probably still is true, there might be changes going on or that have happened that I am unaware of, that have changed how this works. If I hear of this, I will let you know.
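As a quick back-of-the-envelope illustration of that multiplication, here is a small sketch. The numbers are entirely made up for a hypothetical large implementation, and the product should be read as the rough indicator described above, not as an exact row count.

```python
from math import prod


def precalculated_size(systemusers: int, owner_teams: int,
                       business_units: int, security_roles: int) -> int:
    """Rough size indicator: the product of the four counts."""
    return prod((systemusers, owner_teams, business_units, security_roles))


# Hypothetical large implementation.
print(f"{precalculated_size(5_000, 50, 20, 30):,}")
```

With these made-up numbers the indicator lands at 150,000,000, which shows how quickly the product grows when several of the factors are large at the same time.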

by gustaf | Mar 31, 2019

Azure Active Directory (AAD) has a feature that allows users of foreign tenants to be granted access to the current tenant. In other words, if you are running contoso.com and a user of northwind.com would like to have access, you can add this user as a guest account in Azure. However, I have found that giving this user access to Dynamics is not fully straightforward, although it is far from rocket science. In this article I will show how this is done.

Do note that I have heard from people in the product team that there are features of the Power Platform that cannot currently be accessed using a guest account; I think it was Canvas Apps and Flow. I will have to try this out and get back to you in a later article (or someone else could! I would appreciate a link back to this article). I also think that they are working on this.

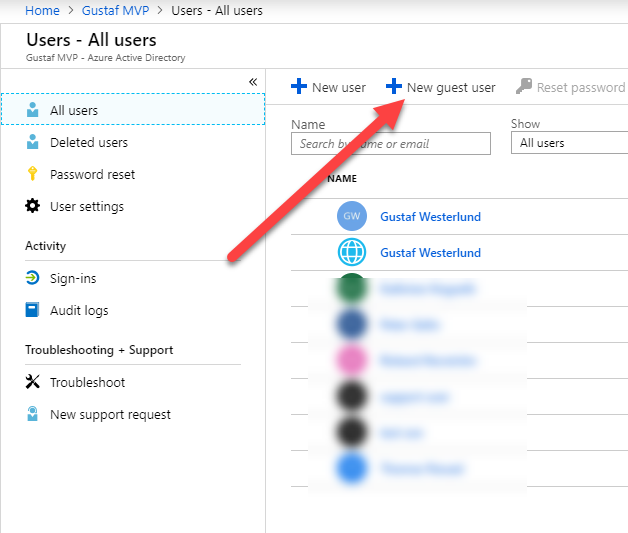

On a high level, what we need to do is:

- Add user in AAD

- Grant License

- Wait for the user to pop up in CDS/Dynamics

- Assign a security role in CDS/Dynamics

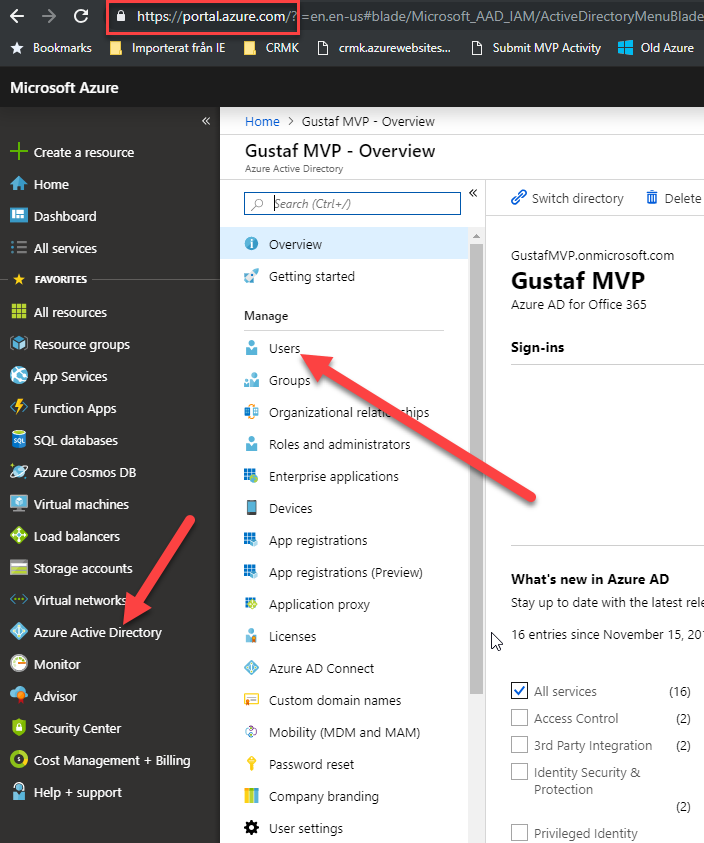

To start with, we need to go to the Azure Portal: https://portal.azure.com – and click on the AAD menu item on the left.

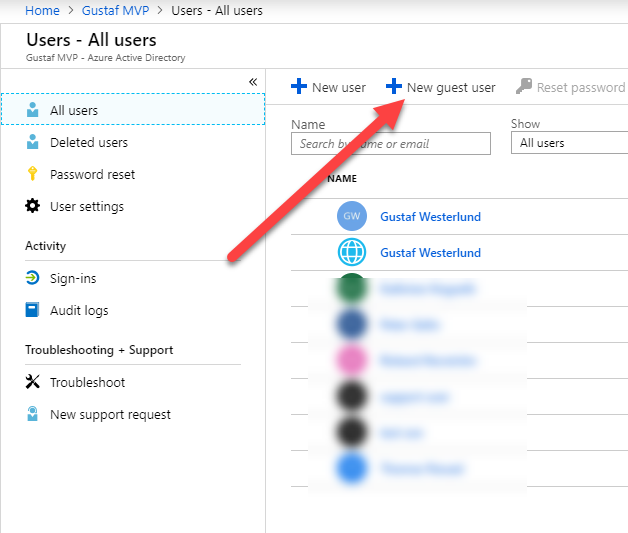

Browse to portal.azure.com -> click Azure Active Directory (AAD) -> Click Users

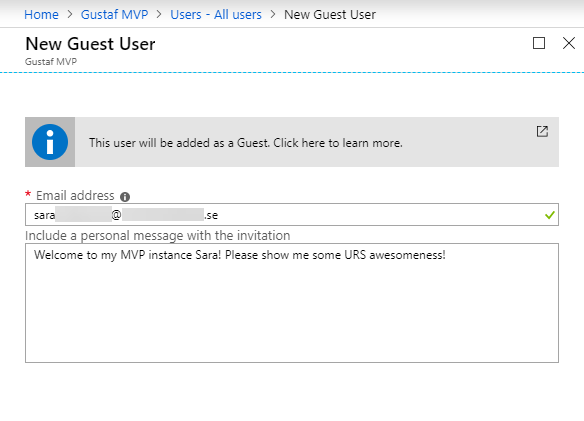

Enter the email address of the user, and perhaps some nice personal email message showing you are not some evil spammer!

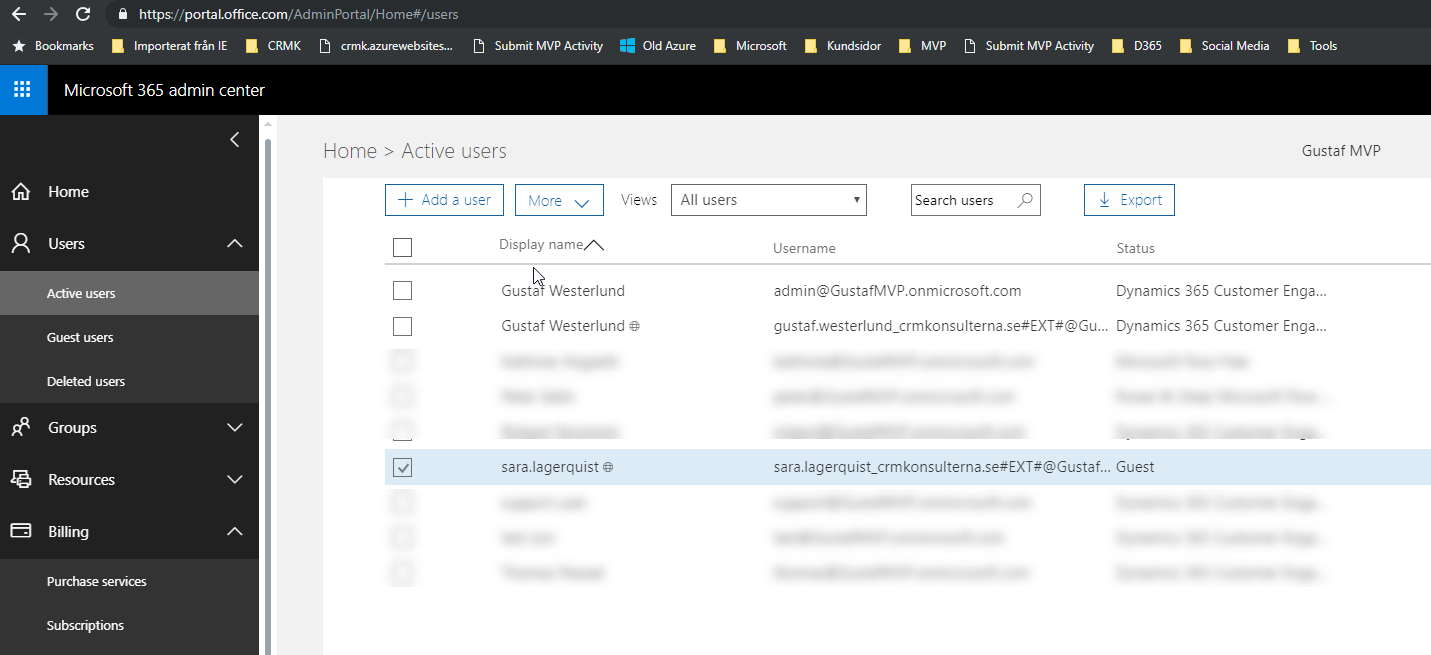

Then go to portal.office.com, where you will now be able to see the new guest user.

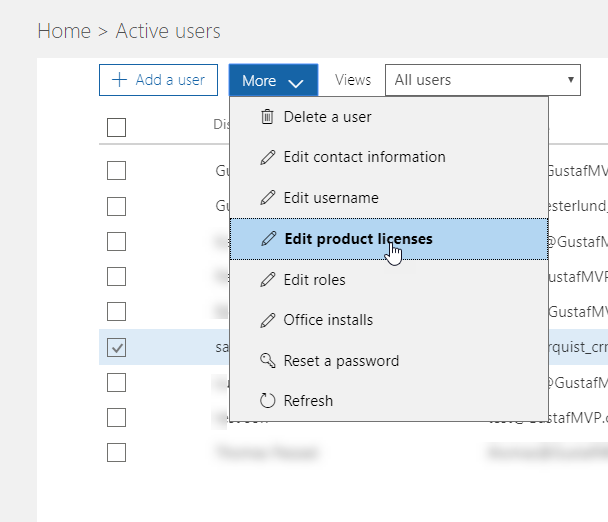

Select the guest user and click “Edit product licenses” – Note, I have not been able to set licenses directly by opening the user, only this way.

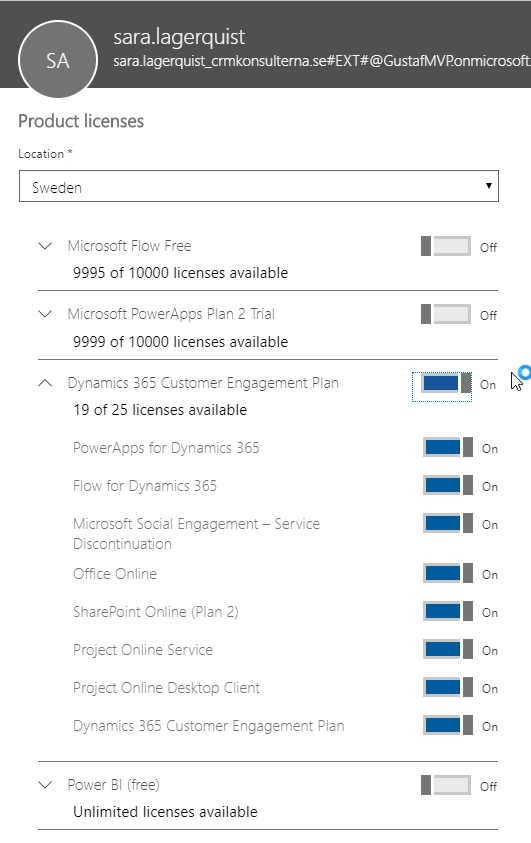

Assign the license required, P2 or Dynamics Customer Engagement App or Plan – in the example above, a Dyn365CE Plan 1 (trial)

After you have assigned the guest user a license, you have to wait a while until the asynchronous service in O365 provisions the user in the CDS. This often is rather quick, but sometimes takes more time. When I was making this, it took more than 15 minutes.

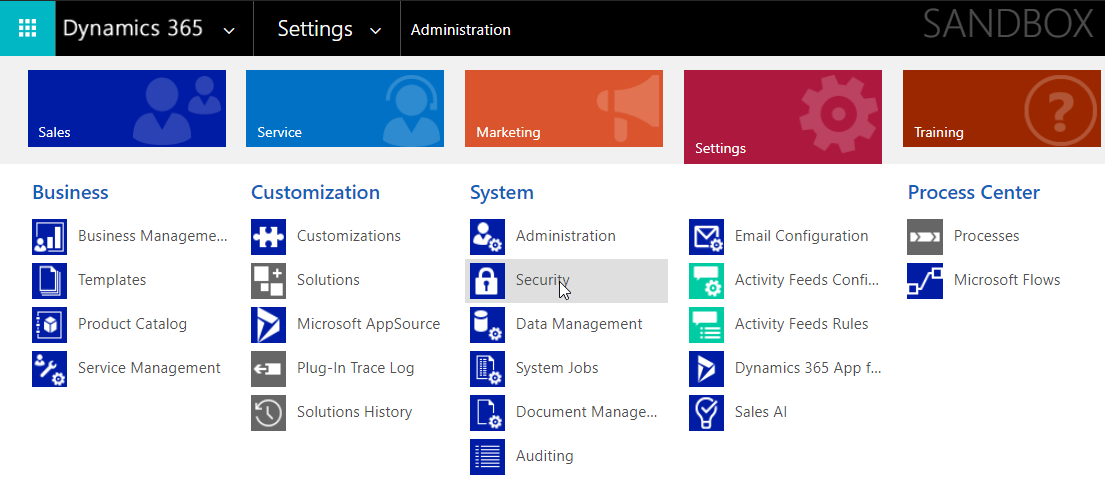

To find the user in CDS/Dyn365 go to Settings and click on Security. (Old UI)

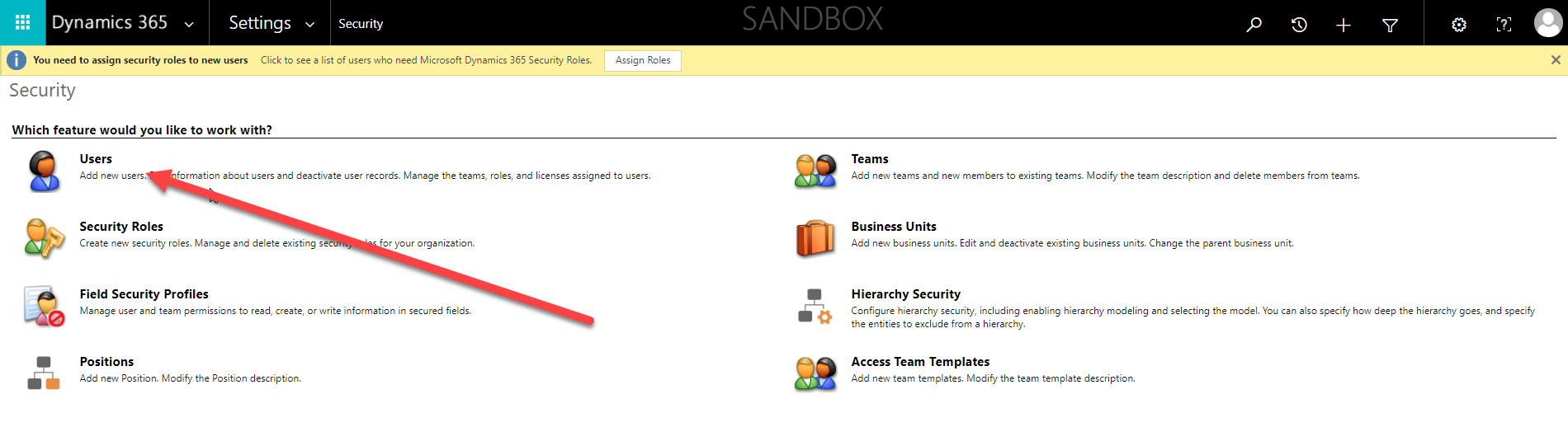

And then click on “Users” in the Security area.

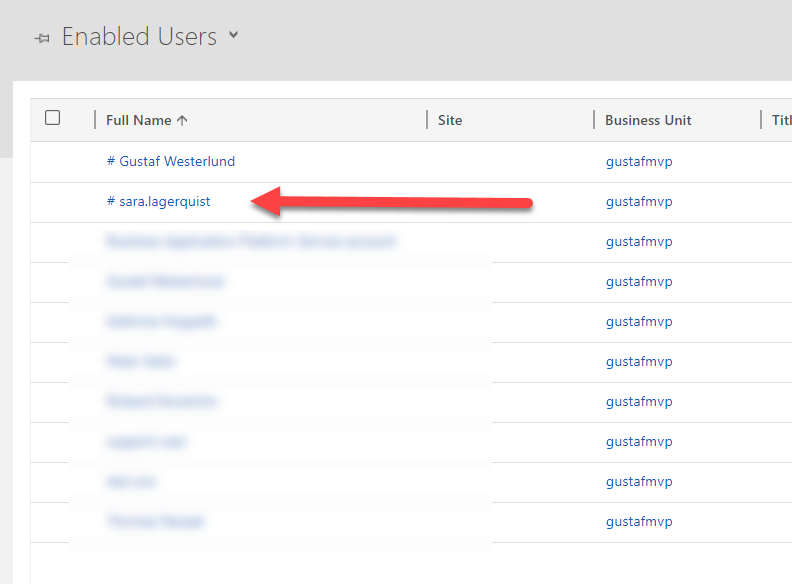

This is what a guest user looks like in Dynamics 365/CDS. It has a # sign in front of it. As you can see, I have another one with my name, created previously.

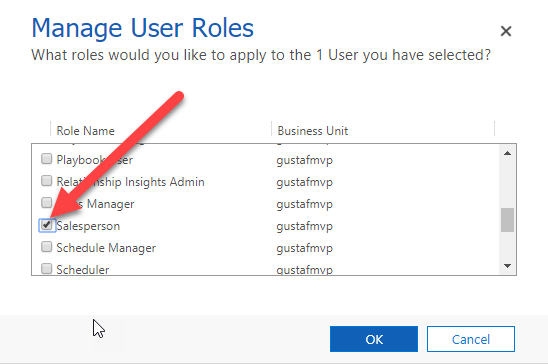

The last thing that has to be done is to grant the guest user the correct role.

After this, just give the user the direct URL to the system and they should be able to log in with their normal user account.

This is a very useful method to use when setting up trials for someone, as they do not have to sign in with another account to access the system. I strongly recommend it.

As mentioned in the beginning of this article, there might still be some issues with using canvas apps and Flow using guest users, so do be aware that not all features could be available.

by gustaf | Sep 5, 2018

Server-side sync is a technology for connecting Dynamics 365 CE to an Exchange server. When connecting an Online Dynamics 365 to an onprem Exchange there are some requirements that need to be met. These can be found here: https://technet.microsoft.com/sv-se/library/mt622059.aspx

However, I just had a meeting with Microsoft and based on the version shown 2018-09-05, they have now added some new features that they haven’t had time to get into the documentation yet.

One of the most interesting parts of the integration is that it requires Basic Authentication for EWS (Exchange Web Services). Of the three types of authentication available, Kerberos, NTLM and Basic, Basic Authentication is, as the name might hint, the least secure. Hence it is also not very well liked by many Exchange admins and may be a blocker for enabling Server Side Sync in Dynamics 365.

In the meeting I just had with Microsoft, they mentioned that they now support NTLM as well! That is great news as that will enable more organizations to enable Server Side Sync.

There is still a requirement on using a user with Application Impersonation rights which might be an issue as that can be viewed as having too high rights within the Exchange server. For this there is currently no good alternative solution. I guess making sure that the Dynamics Admins are trustworthy and knowing that the password is encrypted in Dynamics might ease some of that. But if the impersonation user is compromised, then a haxxor with the right tool or dev skills could compromise the entire Exchange server.

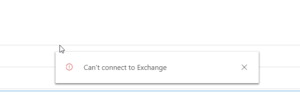

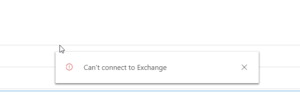

Microsoft also mentioned another common issue that can arise with the Outlook App when using SSS and hybrid connection to an Exchange 2013 onprem. It will show a quick alert saying “Can’t connect to Exchange” but it will be able to load the entire Dynamics parts.

According to Microsoft, this might be caused by the fact that Exchange 2013 doesn’t automatically create a self-signed certificate that it can use for communication. Hence this has to be done manually.

This can be fixed by first creating a self-signed certificate and then modifying the authorization configuration using the instructions found here. Lastly, publish the certificate. It can also be a good idea to check that the certificate is still valid and hasn’t expired.

I will see if I can create a more detailed instruction on this later.

Gustaf Westerlund

MVP, Founder and Principal Consultant at CRM-konsulterna AB

www.crmkonsulterna.se

by Gustaf Westerlund | Aug 3, 2014

As I have previously discussed (on Software Advice in this article), the US laws regarding the right of US legal authorities to order US-owned companies to hand over data are very strong. Hence, if one has sensitive data that might be of interest to any government agencies in the US, one should think once or twice about storing it in a data center owned by a US company (like Salesforce.com, Amazon, Microsoft, Google etc.).

A recent case in New York has shown that this is not only theory but very much practice. The case concerned an email account, probably on outlook.com; however, the difference to SalesForce.com or CRM Online is purely academic.

On the positive side, Microsoft is fighting back, trying to protect its customer, something I am very happy about. I do hope they do this for all customers.

The other direct positive side to this is, of course, that Microsoft CRM can be acquired from other sources than companies based in the USA, for instance as a standard CRM On-premise installation or a partner-hosted installation, of which there are many service providers, like Midpoint and my own company CRM-Konsulterna in Sweden. SalesForce.com, on the other hand, does not have this option, so if you have sensitive data, be careful; it might be ordered into the wrong hands.

Gustaf Westerlund

MVP, CEO and owner at CRM-konsulterna AB

www.crmkonsulterna.se
